There’s a lot of churn right now in the AI/LLM/Agent space, and I’ve found an approach I like for solving production problems and writing production-quality code (one-offs, prototypes, etc. get much less rigorous structure). The short version: use multiple LLMs for research and architecture, then guide implementation through a phased crawl/walk/run plan. Here’s how that plays out in practice.

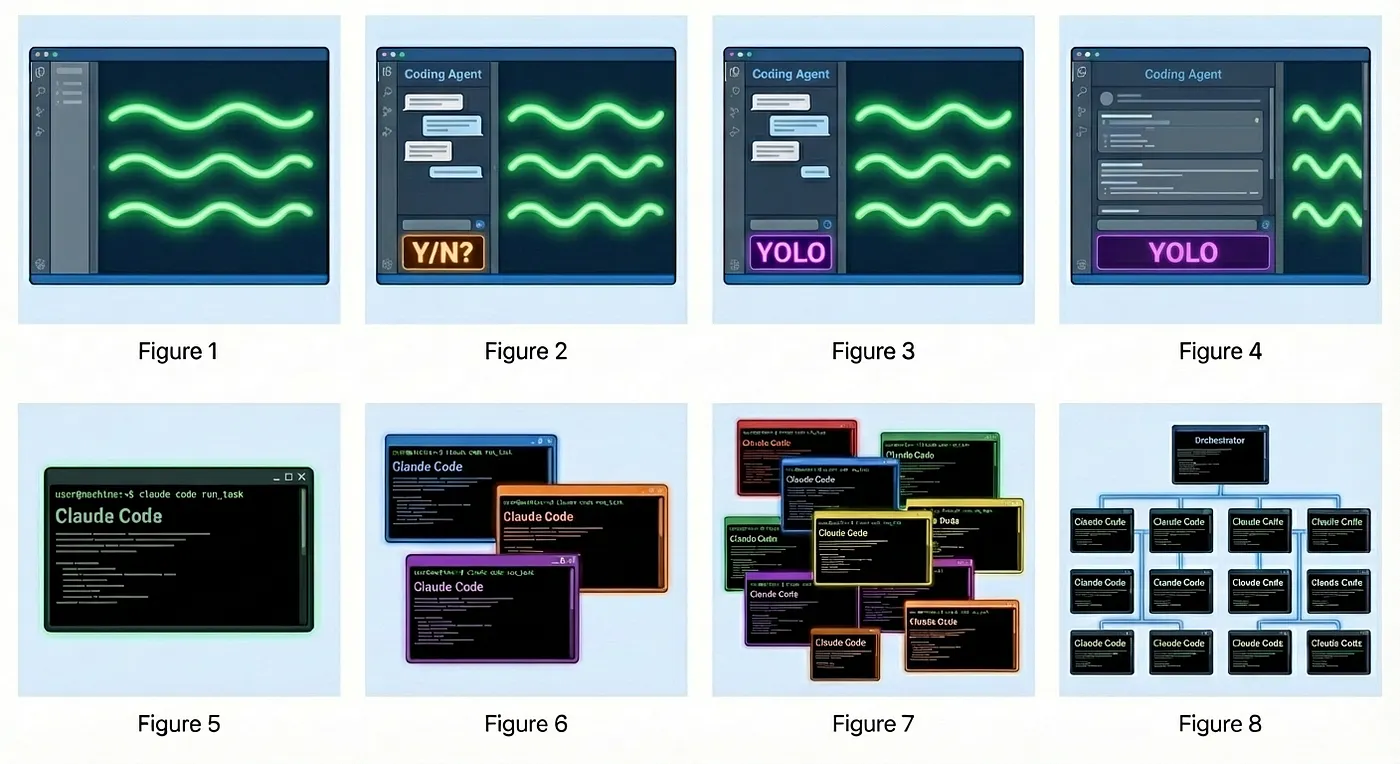

Steve Yegge recently mapped out where we all seem to be in this evolution:

Process

I don’t bother estimating effort anymore. Given that any feature that is technically possible can be done without much human effort, I focus first on “can this thing be done?”

Little things? JFDI.

Anything fairly trivial I just ask the Agent to go ahead and implement. Your definition of trivial may differ from mine, but things like “add a field to this form”, “change the way this existing thing works”, etc. all fall into the “JFDI” bucket.

If I wouldn’t have to sit down and plan something out, the Agents don’t have to either (and then some, of course!).

Can this even be done?

This is for larger features (“We need to add ‘Sign in with Google’ SSO to our Rails app” kinda stuff), more exploratory work, and things I don’t know how to do or whether they can be done at all. Big picture stuff like “I need to ingest 30,000 images per second, how do I do that in GCP?” and smaller things that are technically unknown like tl;dr: you can’t.

The larger the item or the more unknown it is to me, the more rigor I’ll apply. In the example of “How do I add SSO to my Rails app?”, I already know we’re likely to use Devise/OmniAuth/etc., so I skip the deeper probing via multiple LLMs and just ask one of them, using it to validate my assumptions (“Is Devise the right gem for this or is there a better approach?”) & generate a plan.

For more complex things or areas where I know very little (and thus won’t be able to spot a hallucination) I will open windows to ChatGPT, Gemini, and Claude, and ask them the same initial question. As I iterate through each one, I may go back and edit a prompt (sometimes an LLM will ask me a clarifying question that’s helpful, etc.) or add a refinement.

I’ve found it’s hit-or-miss with each LLM. I can’t discern a huge difference in how one performs over another on architecture, other than that Gemini, unsurprisingly, appears much better on GCP internals.

By asking multiple LLMs, however, I find hallucinations easier to catch: 2 of the 3 will say the same thing and the 3rd will recommend something different, and typically that one is totally made up!
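The cross-checking itself happens by reading, not by script, but the heuristic is simple enough to state as code. A toy Ruby sketch, assuming each model's recommendation has already been boiled down to a comparable string; the `outliers` helper, the model labels, and the answers are all hypothetical:

```ruby
# Hypothetical helper illustrating the "2 of 3 agree" heuristic.
# Input: { model_label => normalized recommendation }.
# Output: labels whose recommendation differs from a strict majority,
# or [] when no strict majority exists.
def outliers(answers)
  majority, votes = answers.values.tally.max_by { |_answer, count| count }
  return [] if votes.nil? || votes <= answers.size / 2

  answers.reject { |_label, answer| answer == majority }.keys
end

# Made-up answers to "how do I ingest 30,000 images per second?"
answers = {
  "Model A" => "queue + worker pool + object storage",
  "Model B" => "queue + worker pool + object storage",
  "Model C" => "a managed bulk-image API that doesn't exist"
}

outliers(answers) # => ["Model C"]
```

Requiring a strict majority matters: if all three answers disagree, nothing stands out as the odd one, which is itself a signal to dig deeper before trusting any of them.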

After we’ve worked everything out, I’ll ask each one to give me a high-level summary of the architecture we’ve come up with.

If this was “I need to ingest 30,000 images per second” this might be a bunch of cloud components and a placeholder for some ingest program, or if it is “I need to add this functionality to my app” it might be some Controllers, Models, Attributes, DB migrations, etc.

No code, just high level words about pieces and how they fit together.

Architectural Review

I then look at each one and generate a final architecture document. This usually involves choosing pieces of each, although sometimes one is a clear winner without any substantial deficiencies. Often, though, there’ll be some weeeeird stuff that I need to excise.

Plan Creation

The key constraint for the LLM is a crawl/walk/run phased approach. The idea is to work from the outside in: crawl gets the skeleton right with no real implementation, just structure and relationships. Walk makes it actually work, as simply as possible, with tests. Run finishes it out. The value is catching wrong assumptions while they’re cheap to fix, before the LLM has built a ton of code on top of them.
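To make the crawl/walk split concrete, here’s a minimal Ruby sketch built on the image-ingest example. `ImageBatch`, `IngestPipeline`, and the Array standing in for storage are hypothetical names for illustration only; the point is that walk fills in behavior without changing the shape crawl established.

```ruby
# Hypothetical image-ingest example; none of these names come from a real project.

# Crawl: structure and relationships only. Nothing actually runs yet.
module Crawl
  # A batch of raw images handed to the pipeline.
  class ImageBatch
    attr_reader :images

    def initialize(images)
      @images = images
    end
  end

  # The pipeline owns its storage dependency; behavior is deferred.
  class IngestPipeline
    def initialize(storage)
      @storage = storage
    end

    def ingest(_batch)
      raise NotImplementedError, "implemented in the walk phase"
    end
  end
end

# Walk: the simplest thing that could possibly work, on the same shape.
module Walk
  ImageBatch = Crawl::ImageBatch # unchanged from crawl

  class IngestPipeline
    def initialize(storage)
      @storage = storage
    end

    # Appends every image to storage and returns how many were stored.
    def ingest(batch)
      batch.images.each { |image| @storage << image }
      batch.images.size
    end
  end
end

storage = [] # an in-memory Array stands in for real storage during walk
batch = Walk::ImageBatch.new(%w[a.jpg b.jpg])
Walk::IngestPipeline.new(storage).ingest(batch) # => 2
```

In a real walk phase the agent would also add a minitest test for `ingest`, and run would replace the Array with real storage. If crawl’s shape turns out to be wrong, this is where changing it is cheapest.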

I paste the amalgamated architecture into each LLM and say the following, filling in the bracketed parts. (For the Agent, I currently like Claude Opus 4.5; 4.6 seemingly gets stuck in infinite loops of uncertainty. GPT 5.4 is good, too, but I’ve been happy with Opus. Gemini 3.1 doesn’t seem to give good results unless I’m working on Android or GCP stuff.)

This is a high level architecture of [description of the problem to be solved]. We are going to implement this with [Agent].

We need to take a “Crawl, walk, run” approach so as not to overwhelm the Agent, and also to expose any uncertainty and refactor before we write a lot of code.

Crawl: skeletonize things; our goal is to make sure the plan and architecture look right. No implementation beyond class definitions, relationships, and attributes: just the framing of the implementation. Ensure assumptions make sense.

Walk: Flesh out the implementation, do the simplest thing that could possibly work. Write tests to exercise this functionality and validate deeper assumptions.

Run: Full implementation.

Your task: take the architecture document and break it into small, discrete chunks, and plan out the entire thing. We use the crawl -> walk -> run approach so we can keep the end goal in mind and not back ourselves into a corner with shortsighted thinking. We should balance this need with reasoned pragmatism so we don’t contort ourselves into a pretzel over-building in crawl/walk, though.

I review the implementation plan. Nowadays the plan is almost always good enough to just get started with, but every now and again I’ll see a totally bonkers assumption that I have to correct.

By having the agent take the crawl -> walk -> run approach, I seem to get better results, because it has to think about the work with an eye toward “run” from the start, with minimal backtracking. If I try to one-shot a non-trivial feature/implementation, I’ll often get deep into it only to find out that X is made up, Y is wrong, etc., and we’ll have to burn a ton of tokens backing things out or changing the architecture.

This also appears to be fairly token-cost-effective: I want the Agent to spend most of its time coding, not backtracking or asking me questions that a cheaper model already figured out.

Implementation

I chunk this up into smaller pieces and put them into Markdown files (#architecture.md, #crawl.md, etc.)
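This chunking can be done by hand, but if the plan comes back with predictable phase headings it’s easy to script. A minimal Ruby sketch, assuming the plan marks phases with `## Crawl`, `## Walk`, and `## Run` headings (LLM output formats vary, so treat that as an assumption); `split_plan`, `write_phase_files`, and the sample plan are hypothetical:

```ruby
require "fileutils"

# Hypothetical helpers; assumes "## Crawl", "## Walk" and "## Run" headings.
PHASES = %w[crawl walk run].freeze

# Splits plan text into { "crawl" => "...", "walk" => "...", "run" => "..." }.
# Each section runs from its phase heading up to the next phase heading;
# anything before the first phase heading is dropped.
def split_plan(text)
  sections = Hash.new { |hash, key| hash[key] = +"" }
  current = nil
  text.each_line do |line|
    heading = line[/\A##\s+(\w+)/, 1]&.downcase
    current = heading if PHASES.include?(heading)
    sections[current] << line if current
  end
  sections.slice(*PHASES)
end

# Writes one Markdown file per phase (crawl.md, walk.md, run.md) into dir.
def write_phase_files(text, dir)
  FileUtils.mkdir_p(dir)
  split_plan(text).each do |phase, body|
    File.write(File.join(dir, "#{phase}.md"), body)
  end
end

plan = <<~MD
  # Image ingest plan
  ## Crawl
  - Define ImageBatch and IngestPipeline (no behavior)
  ## Walk
  - Store images in memory; add minitest coverage
  ## Run
  - Swap in real storage
MD

split_plan(plan).keys # => ["crawl", "walk", "run"]
```

Calling `write_phase_files(plan, "docs/plan")` (the directory is arbitrary) would then produce one file per phase, ready to reference with `@` in Cursor.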

I then have the Agent do something like:

Please read @AGENTS.md and @architecture.md.

We will be implementing @crawl.md, so start the planning process and start when ready.

I use Cursor, which will handle creating individual tasks for the agent to follow (essentially managing the TODO list) so we can restart if it breaks, etc.

@AGENTS.md looks something like:

# Agent Instructions for $Project

## Project Overview

This is **$Project**, ....

## Technology Stack

- **Language:** ...

- **Framework:** ...

- **UI:** ...

- **Code Quality/Linting:** ...

- **Testing:** ..

## Project Structure

- `builds/` - where builds go

- `...` - so on

## Development Commands

```bash
# Day to day development
RUBY_ENV=dev ruby foo.rb

# Testing
RUBY_ENV=test bundle exec rake # Run tests
RUBY_ENV=test bundle exec ruby -Ilib:test test/path_to_your_test.rb # Run a specific test file

# Code Quality
bundle exec rubocop # Run Rubocop linter
bundle exec rubocop -a # Auto-fix safe issues
stylua ./ # Format lua files (only needed if you modify any lua code)
luacheck ./ # Lint lua files (only needed if you modify any lua code)

# Deployment
./scripts/foo.sh
```

## Key Documentation References

`README.md` should contain all of the helpful information primarily for developers.

## Coding Standards & Conventions

### Code Style

- Follow Ruby style guidelines (2 spaces for indentation, snake_case for variables/methods)

- Write clean, self-documenting code with comments only when necessary

- Add YARD documentation to methods and classes

### Testing

- Write unit tests wherever possible/reasonable.

- Ensure test coverage for new features

- Tests are located in `..`

- We use minitest/minimock

## Before Starting Any Task

1. **Understand the context** - Ask clarifying questions if you can't find the answer in the docs or code.

2. **Check existing patterns** - Look at similar implementations in the codebase

3. **Plan the approach** - Consider architecture, dependencies, and side effects

4. **Identify affected areas** - Determine what files/modules will be modified

## After Completing Any Task

**CRITICAL:** Always run these verification commands in order to ensure nothing is broken:

```bash
RUBY_ENV=test bundle exec rake
bundle exec rubocop -a
```

### Command Details:

- **`RUBY_ENV=test bundle exec rake`** - Runs all tests. All tests must pass.

- **`bundle exec rubocop -a`** - Runs Rubocop static analysis. Must pass all rules in `./.rubocop.yml`.

**Do not consider a task complete until both commands pass successfully.**

### Update the Changelog

After making any changes, update the `CHANGELOG.md` file:

1. **Add a new entry** at the top with today's date & VERSION (format: `# YYYY-MM-DD MAJOR.MINOR`)

2. **Document the changes** with bullet points describing:

   - New features added

   - Bug fixes

   - Dependency upgrades

   - Breaking changes or migration notes

   - Configuration changes

3. **Be specific** - Include file paths or component names when relevant

4. **Use clear language** - Users should understand what changed and why it matters

**Example format:**

```markdown
# 2026-03-11

* Upgraded Rails
* Made a new Dockerfile
```

**Do not update the changelog for:**

- Trivial changes (typo fixes, formatting)

- Internal refactoring that doesn't affect functionality

- Documentation-only changes (unless significant)

## Common Tasks & Guidance

### Adding a New Feature

### Adding Dependencies

- Add to `Gemfile`

- Prefer stable, well-maintained libraries

## Error Handling & Debugging

- See `docs/ERROR_HANDLING.md` for patterns

- Use proper logging (see `docs/LOGGING.md`)

- Catch and handle exceptions gracefully

- Provide meaningful error messages to operators (via the Notifier)

## Security Considerations

- Never hardcode sensitive data (API keys, credentials)

- Use secure storage for tokens/credentials

- Follow Ruby security best practices

## Git Workflow

- **Main branch:** `main`

- Commit messages should be clear and descriptive

## Getting Help

- **Documentation:** Check `docs/` folder for the relevant sections. Update them when you make changes to relevant code paths.

- **Changelog:** Review `CHANGELOG.md` for recent changes

## Important Notes for AI Agents

- **Always verify before assuming** - Check actual code rather than making assumptions

- **Preserve existing patterns** - Match the existing code style and architecture

- **Run all validation commands** - Tests, rubocop must all pass

- **Documentation is your friend** - The `docs/` folder contains detailed guidance

Epilogue

I’d say 99% of the time this does exactly what I want with minimal backtracking and disruption. The other 1% is usually one of two things: an assumption baked into the architecture turns out to be wrong (a gem doesn’t do what we thought, an API doesn’t exist the way we expected, etc.), or the agent gets tangled up and starts spinning its wheels. The first almost always surfaces during crawl, which is the point: no real code has been written yet so the cost of being wrong is low. The second I handle by killing the context and restarting from the last checkpoint, which is exactly why breaking work into those discrete files (#crawl.md, etc.) & using Cursor’s #TODO/planning mode is worth the overhead.

I’m quite happy with this process! What’s yours?