A Few Single-line Stories in Everyday Frustration

“Hey Siri, what’s the weather tomorrow?”

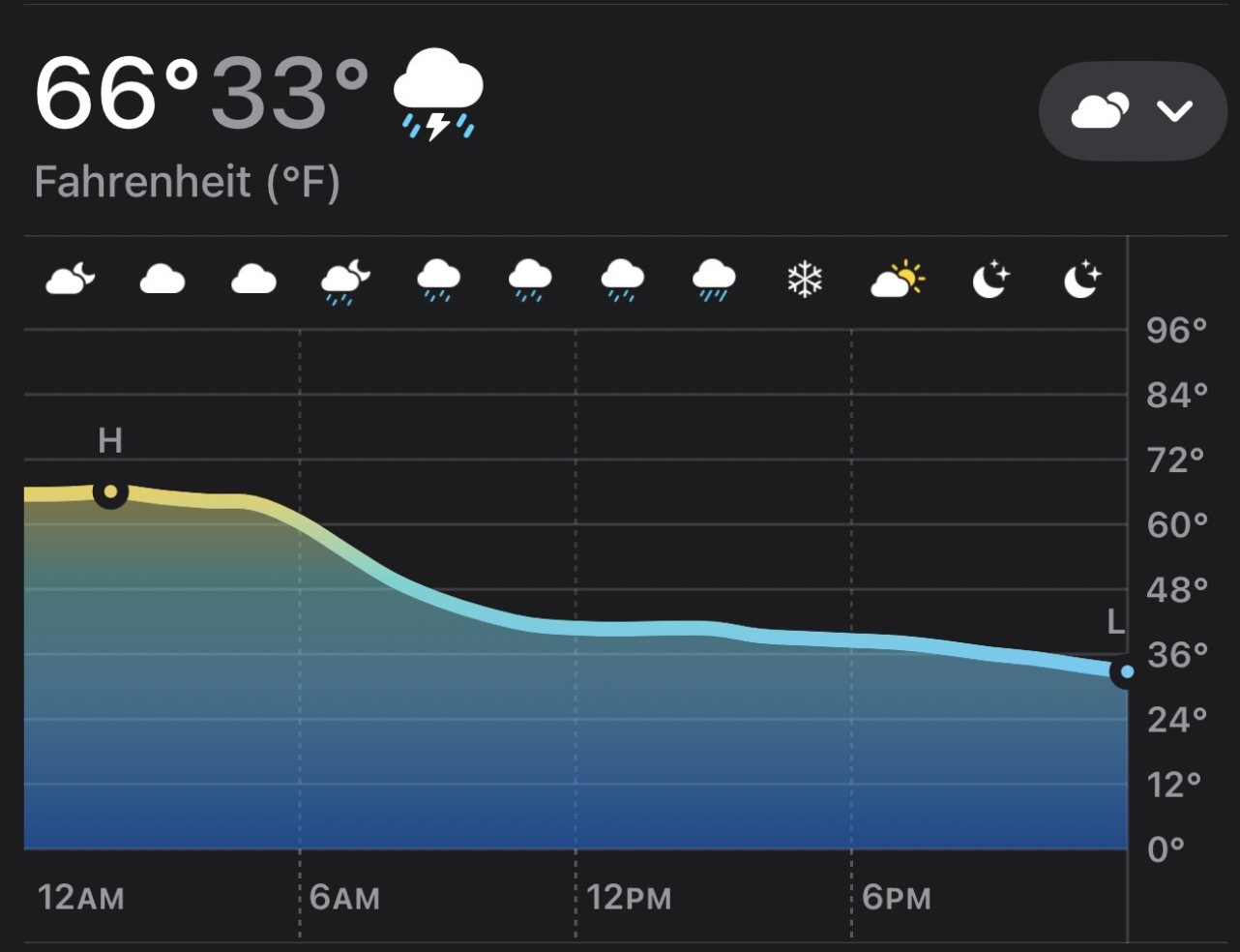

Looks like thunderstorms tomorrow. The high temperature will be 66 degrees, and low will be 33.

So you go outside with an umbrella and a t-shirt only to realize that it’s nearly snowing when you’re out. Why? Because of a chart that looks like this:

“Hey Siri, play Toxic”. And then “Toxic” by Britney Spears plays instead of the one by BoyWithUke that I intended.

Why? I think it’s due to some deep unintentional (and some intentional) failures at Apple. Apple’s “Brand Promise” is that Apple products are market leaders in quality and innovation, new products are revolutionary, and everything should “just work”. This promise has served them well but placed Siri right in the middle of a no-win situation. Siri sucks because of a perfect storm of culture, people, and process at Apple.

Siri’s Tale of Woe

Lack of Training Data for Models

Apple has long touted their privacy-first stance. One of the reasons why I’m all-in on Apple’s ecosystem is that I do actually believe they take this more seriously than their competitors. I don’t want to be the product being sold, so I deeply appreciate this and put my money where my mouth is.

This privacy-first stance has been claimed as a major reason why Siri is so bad: models won’t have as much training data to learn from. I deeply question this - commercial models like OpenAI’s ChatGPT and Anthropic’s Claude have not obtained special, privacy-invading data (Google does get lots of Android-sourced data, but it’s not obvious to me this has helped Gemini in a substantial, differentiating way).

The blocking of Siri improvements due to privacy concerns feels like an organizational immune reaction, a reflex, and doesn’t feel like a considered choice, especially when there are so many ways to improve Siri that still uphold the brand promise.

Perfectionism’s Double-Edged Sword

Apple’s “perfectionism”, instilled by Steve Jobs, has resulted in obviously amazing products. But, unmoored by Steve’s intuition and discernment, it resulted in critical failures:

- Seeking a “thin and light” laptop resulted in the disastrous butterfly keyboard, and the same thinking led to 2014’s “Bendgate”. (How “thin and light” a laptop must have been to have appeased Jony Ive back in the day isn’t documented, unfortunately.)

- AirPower was a rare public failure for Apple. Yes, having an all-in-one wireless charging station with “place anywhere” technology is cool, but the quest for perfection resulted in the laws of thermodynamics smacking down Apple’s ambitions.

- Why did Steve Jobs need to say “Don’t hold it that way”? Because it looked better. The solution of a different band design didn’t look as symmetrical.

- The 2013 “Trashcan” Mac Pro may have looked perfect on paper, but it clearly didn’t work in practice.

Apple’s stance that things “just work” has led them down a rigid path in favor of traditional AI/ML and algorithmic decisions for Siri. This in and of itself is not fatal; however, it’s hard to agree with Apple’s decision to accept a Siri that predictably doesn’t work on everyday tasks.

This feels like the major disconnect here. Apple chose predictable but limited behavior over (carefully) introducing a much better product experience that comes with less predictable, inconsistent performance. I can see in some sense how a “things should just work” perfectionist perspective could lead someone to this position. Yes, Siri successfully told me the weather. It works. It might not be as advanced as Google’s assistant, but Google’s makes an incorrect guess at times. On balance, Apple clearly believes the former is the Right decision.

I’m shocked that no one in Apple leadership could see how big a problem this is. Siri is embarrassingly bad at things it really ought to nail 100% of the time, and the brand damage there seems more significant than Apple understands.

Organizational Dysfunction

Tim Cook’s leadership has been mixed. Clearly, as scored by market cap he’s done great, but I think in 10 years he’ll look like Apple’s Steve Ballmer.

Apple’s “Liquid Glass” design language and the overall idea of the “iPhone, iPad, Mac” operating systems “needing to look and feel the same” are clearly a result of someone looking at macOS and going “eww, why can’t it be like iOS? Why do we have separate iOS and macOS software teams? They should all be in one group!” That the departure of Alan Dye was regretted by Apple but celebrated by the community tells you there’s a substantial disconnect between leadership’s view and what actually works on the devices. This same kind of disconnect kept Siri from being viewed - at the leadership level - as the 5-alarm fire it actually is.

Everyone except a small few missed it, so Apple could be excused, but just compare how quickly Google re-oriented with Gemini, going from a distant position to trading first place with the rest of the top 3 providers, with Apple’s... current... whatever they are doing. It took far too long for John Giannandrea to be shown the door. Everything I read suggests that he’s capable of leading AI efforts technically, but couldn’t find a way to do so successfully within Apple. That is a failure of senior leadership, especially Cook. It sounded like a mess. If this is to be believed, part of it was this:

Apple apparently weighed up multiple options for the backend of Apple Intelligence. One initial idea was to build both small and large language models, dubbed “Mini Mouse” and “Mighty Mouse,” to run locally on iPhones and in the cloud, respectively. Siri’s leadership then decided to go in a different direction and build a single large language model to handle all requests via the cloud, before a series of further technical pivots. The indecision and repeated changes in direction reportedly frustrated engineers and prompted some members of staff to leave Apple.

Then they (rightly, in my view) went back to a split:

“I understand this perception of bigger models in data centers somehow are more accurate,” he told Ars Technica in an interview, “but it’s actually wrong. It’s actually technically wrong.”

“It’s better to run the model close to the data, rather than moving the data around,” he continued. “And whether that’s location data — like what are you [the user is] doing [such as] exercise data, what’s the accelerometer doing in your phone — it’s just better to be close to the source of the data, and so it’s also privacy preserving.”

Yes, Siri’s architecture is apparently pretty bad. But that’s all fixable.

I absolutely agree that specialized on-device models (like the ones in the photo pipeline) are key; however, it’s shocking to me that Siri appears to continue to be a giant monolith that cannot take advantage of on-device algorithms or models. Was this just a technical problem, or did ineffective leadership result in an organization incapable of deciding and executing on a direction?

How to Fix (Some Of) This

Lack of Training Data & Privacy

A company that has figured out how to safely leverage health data can certainly figure out how to securely manage privacy in the age of AI. You can’t tell me that out of over 1 billion iPhone users Apple can’t find enough people to opt in to AI training-data collection. They have a large audience using health features; over 400,000 people enrolled in the first Apple Heart Study, and over 350,000 people are currently contributing to research studies via the Apple Research app.

Apple should make it much easier for people to opt in to helping Apple with their AI efforts. Everyone knows what AI is, and if Apple makes clear what kind of information is being collected (and, more importantly, why it will pay off to give it), I suspect enough data can be collected to greatly improve Siri.

Cultural Perfectionism

Apple’s stance on perfectionism could’ve been moderated by simply asking: “What’s worse: Siri that everyone thinks is garbage and fails all the time, or a small on-device LLM to determine intent that might get it wrong every now and again?” Apple has never been the leader in certain software decisions - I wouldn’t expect them to have invented the transformer model - but I would’ve expected them to try to take more, but smaller, risks on the Siri side.

There’s a wide gulf between “Siri is entirely predictable and algorithmic [and thus fits our definition of shippable]” and “unleash an LLM on your iPhone”. When I ask for the weather tomorrow, do I literally mean the high temp and the low temp? Could you look at other on-device signals, like: Do I then ask Siri “What’s the weather at 2pm?” Do you see me opening up the weather app, tapping into tomorrow’s detail? It feels like product managers at Apple don’t use Apple products very much, because how could they leave this broken for so long? Or they’ve tried and tried, and something out of their control is blocking them from solving this kind of problem. From the outside it’s impossible to know.

When I ask an ambiguous question like “Hey Siri, play Toxic”, should Siri/Apple Music jump to Britney Spears, or can it do an on-device check first? Is it more likely I meant the song I have listened to recently, have in my library, and have starred, or is it more likely I meant a pop star I hardly listen to? Apple probably already has the data and the algorithms to make this decision better, yet they’re not using them.

Do more people listen to Britney Spears than BoyWithUke? Probably. Absent any additional context, Siri made a reasonable, predictable, correct decision. But Apple has ready access to lots of additional context that would tell them (if they were looking) that Siri made the wrong choice here.
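To make the “on-device check” concrete, here is a minimal sketch of how personal listening signals could outweigh global popularity when two tracks share a title. Everything in it is an assumption for illustration: the `Candidate` fields, the weights, and the popularity numbers are made up, and none of this reflects how Siri or Apple Music actually rank results.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    title: str
    artist: str
    global_popularity: float     # 0..1, hypothetical catalog-wide popularity
    in_library: bool = False     # user has added the track to their library
    favorited: bool = False      # user has starred the track
    plays_last_30_days: int = 0  # the user's own recent play count


def personal_score(c: Candidate) -> float:
    """Blend global popularity with on-device signals.

    The weights are illustrative guesses, not tuned values.
    """
    score = c.global_popularity
    if c.in_library:
        score += 1.0
    if c.favorited:
        score += 1.0
    # Cap the recency bonus so one binge doesn't dominate forever.
    score += min(c.plays_last_30_days, 20) * 0.1
    return score


def pick(candidates: list[Candidate]) -> Candidate:
    """Return the candidate with the highest blended score."""
    return max(candidates, key=personal_score)


# Made-up numbers: the more famous track vs. the one the user actually plays.
candidates = [
    Candidate("Toxic", "Britney Spears", global_popularity=0.95),
    Candidate("Toxic", "BoyWithUke", global_popularity=0.60,
              in_library=True, favorited=True, plays_last_30_days=12),
]

print(pick(candidates).artist)
```

With no personal signals at all, the same function falls back to plain popularity, so the predictable default is preserved for users Siri knows nothing about.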

All of those are signals that Siri successfully responded to my request (probably ticking up some internal metric that makes Siri look successful) but failed to respond to my intent. LLMs are exceptionally good here, and it wouldn’t take a gigantic, expensive trillion-parameter model to put two and two together and realize that maybe there’s a fire here.
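Even without an LLM, the “answered the request, missed the intent” case can be flagged with simple rules. The sketch below is hypothetical: the event kinds, the hint table, and the 60-second window are my own assumptions, not anything Apple is known to log or measure.

```python
from dataclasses import dataclass


@dataclass
class Event:
    t: float          # seconds elapsed since Siri's response
    kind: str         # hypothetical event kind, e.g. "siri_query", "app_open"
    detail: str = ""  # free text: the follow-up utterance or the app name


# Hypothetical follow-up patterns suggesting the first answer fell short.
# Each entry is (event kind, keyword expected in the event detail).
FOLLOW_UP_HINTS = {
    "weather": {("siri_query", "weather"), ("app_open", "weather")},
    "play_music": {("track_skip", ""), ("siri_query", "play")},
}


def missed_intent(request_kind: str, events: list[Event],
                  window_s: float = 60.0) -> bool:
    """Return True if a telltale follow-up happens soon after the response."""
    hints = FOLLOW_UP_HINTS.get(request_kind, set())
    for e in events:
        if e.t > window_s:
            continue  # too late to blame on the original answer
        for kind, keyword in hints:
            if e.kind == kind and keyword in e.detail.lower():
                return True
    return False


# The user asks a more specific weather question 20 seconds later.
follow_ups = [Event(20.0, "siri_query", "What's the weather at 2pm?")]
print(missed_intent("weather", follow_ups))
```

A counter built on something like this would measure intent misses rather than completed requests, which is the metric the “successful” weather answer currently hides.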

Org Structure

Apple is famously “functionally oriented” - instead of having a “Pages” team, they have a Machine Learning org, Software Engineering org, etc. (It’s somewhat telling that “Siri” appears exactly 0 times in that article.)

Siri has ping-ponged between different parts of Apple since the acquisition back in 2010. First, it lived under Eddy Cue as a “Service”. Then it moved into Software Engineering and, with John Giannandrea’s hiring, into its own silo under “Machine Learning & AI Strategy”. After Giannandrea’s ouster, it is now back within Software Engineering under a new position - VP of AI.

Within Software Engineering is where it should’ve been all this time - Siri is uniquely a cross-cutting function. It needs to support every app and service on the device, and provide for APIs and hooks for 3rd party developers. That’s a huge responsibility and requires a ton of internal coordination and decision-making.

If Siri had stayed within Software Engineering then Craig Federighi could’ve organized the teams internally to focus on “Ok, how do we safely and securely get Siri the information it needs to answer ‘What’s the weather tomorrow?’ in the way the user wants?”

Even if “everyone” agreed Siri was broken, nobody other than Tim Cook could order everyone to drop everything and integrate with it. And there’s no evidence he did until it was far too late to do anything about it. The alternative is that leadership didn’t know it was an issue. Are they not listening to customers? Are they gathering, and being measured on, the wrong metrics? What allowed Siri’s terrible performance to fester for so long?

Leaving Hope

Apple kept making the choice to ship a predictably terrible product rather than one that’s unpredictably brilliant. I get the organizational challenges and philosophy that got them there, but I really hope this change is an opportunity for them to figure out a pragmatic way forward that gives customers the experience they want while maintaining the brand promise of “privacy and things ‘just work’”. They have all the resources necessary to do this - it just takes the willingness to make it so.