A Few Single-line Stories in Everyday Frustration

“Hey Siri, what’s the weather tomorrow?”

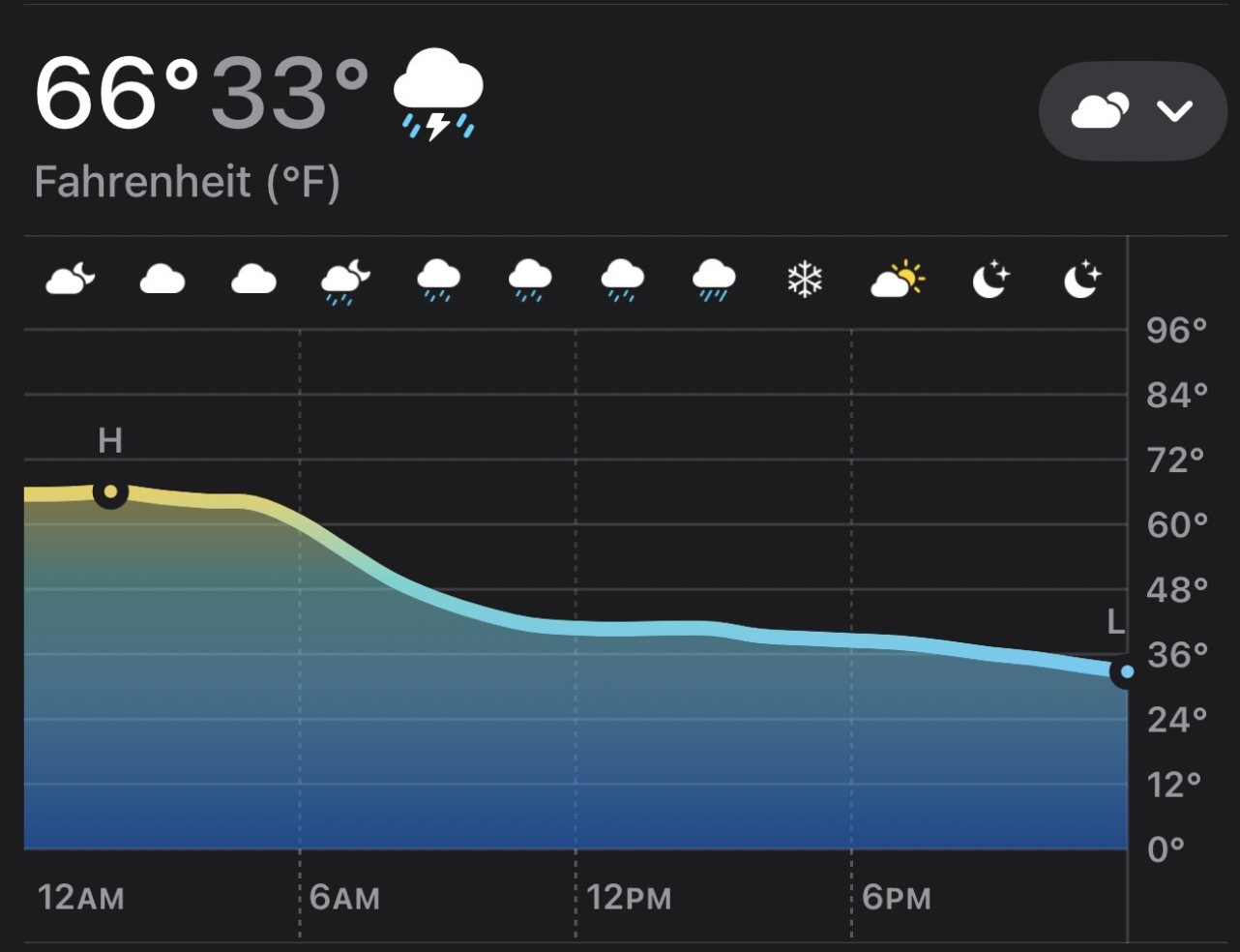

Looks like thunderstorms tomorrow. The high temperature will be 66 degrees, and low will be 33.

So you go outside with an umbrella and a t-shirt only to realize that it’s nearly snowing when you’re out. Why? Because of a chart that looks like this:

“Hey Siri, play Toxic”. And then “Toxic” by Britney Spears plays instead of the one in my library that I already listened to today (BoyWithUke)!

Or when one of my children says “Hey Siri, play «some children’s show music»” and eventually it starts playing albums that are entirely in German. Yes, technically speaking, someone authored an album of this show’s music and it happens to be in German but - can I tell you? - this is absolutely not what anyone meant when they said “Play music from Gabby’s Dollhouse”.

I often remark, maybe just to myself: doesn’t anyone at Apple use this product? Because it certainly feels like the zillion papercuts I get every day from these things ought to be solved with a little bit of old-fashioned Product Design (you know, not how it looks, but how it works). That nobody has bothered to improve any of this in well over a decade seems to imply, to me, that either nobody cares or the people who do can’t effect change.

Why? I think it’s due to some deep unintentional (and some intentional) failures at Apple. Apple’s “Brand Promise” is that Apple products are market leaders in quality and innovation, new products are revolutionary, and everything should “just work”. This promise has served them well but placed Siri right in the middle of a no-win situation. Siri Sucks because of a perfect storm of culture, people, and process at Apple, a company that excels at hardware but struggles with software.

Hardware ain’t Soft

Apple is really great at continuously improving their hardware lines. The original iPhone didn’t have:

- Cut and paste

- The ability to record video

- A front-facing camera

- An App Store

It now has:

- An edge-to-edge screen

- FaceID instead of a Home button

- A dozen cameras on the back

The iPad went through similar evolutions. The iPod had buttons, then the click wheel, then a color screen, etc. etc.

The MacBook line had a hiccup (there was that butterfly keyboard thing and a few other missteps) but has recovered, and the M-series chips keep getting better and better. Zoom out and it’s clear the hardware trend is up and to the right.

You can also point to the Watch, AirPods, etc. as things that got a lot better.

What’s interesting is that the hardware continues on a steady, inexorable improvement path.

This is not easy. This is incredibly difficult, and if Apple did only hardware and made iPhones that ran Android and MacBooks that ran someone else’s OS (please don’t), they would still be doing astonishing things. Apple’s cadence here is both amazing and rarely replicated across the industry. It takes amazing talent (the Apple Silicon team is astonishing), amazing strategy (many of Apple’s hardware iterations first appeared on lower-volume devices so they could figure out how to scale them up without needing to turn around and make a billion of them on an annual make-or-break cadence), and amazing focus.

I think Apple’s hardware improvements are (mostly) due to two things:

- People. Tim Cook is by all accounts an operational expert. He is credited with turning Apple’s supply chain into the best in the world, moving manufacturing to JIT systems, and streamlining distribution. He is known for his intense focus on details and operational metrics and on minimizing waste in production, and he has hired/acquired amazing hardware design talent (especially in Apple Silicon).

- There is a very clear build-measure-learn feedback loop with hardware production. The next M-chip is either faster or it isn’t. The FaceID camera has a clearly defined mean-time-to-failure, false-accept rate, etc. They can say “FaceID works better than TouchID”. Hardware has a very clear definition of “better” or “worse”, and Apple leadership drives decisions off of these metrics.

Software? Software products? Software (both coding and the product itself) is a whole different thing. Yes, there are various metrics people have come up with to measure software quality, and there are attempts at ways to measure product efficacy, but for the most part none of them measure how “good” the product is.

I can measure how long some algorithm takes to complete, and if it’s within such-and-such milliseconds of the target we can use it, but that doesn’t tell me whether the user found the search results they were looking for. I can tell you how often this app crashes, but I can’t use in-app metrics to tell you if people love it and can’t live without it. I can tell you that Siri responded successfully (HTTP 200 OK) 1.5B times yesterday, but I can’t easily tell you in how many of those 1.5B requests Siri did what the user wanted.

Or put another way: it’s hard to measure Siri’s failure, but it is easy to assess it. Steve Jobs famously was that “assessment” function. He would use a thing and say: “This is garbage” and get folks to fix it. You can hire the best developers in the world but if nobody is looking at the product it’s impossible to know if it’s “working” (other than people keep buying it, but that’s a lagging indicator of quality).

Software is Hard

I’ve talked a lot about how software isn’t hardware. (They are not comparable; I’m not saying software is more difficult to produce than hardware, it’s just different. Yet people keep trying to turn software into hardware. Hasn’t worked yet.) Software suffers from diseconomies of scale. You can build anything you dream of, laws of physics be damned! It’s also really difficult to predict whether it will work or when you’ll be finished.

For Siri, the key metric is what, exactly? (No, it’s not NPS.) Apparently, according to David Pogue:

Apple’s numbers showed that by 2024, people were asking questions of Siri 1.5 billion times a day, but it had no number on how many complained about it.

This is, uh, not great. To put it mildly. Number of successful Siri requests is not the same as “Siri is working as desired.” How do you know if Siri is capturing my intent if you aren’t gathering that kind of data? How do you know if Siri is useful? How do you know if Siri did what I actually meant? These metrics are difficult to capture in the wild and if you don’t go looking for these answers, you will never find them.
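To make that gap concrete, here’s a minimal Python sketch that puts two numbers side by side: the “dashboard” metric (share of requests that returned HTTP 200) and a crude proxy for missed intent. Everything in it is hypothetical: the `Interaction` record, its fields, and the 30-second re-ask window are assumptions for illustration, not anything Apple has described. The proxy flags a request as a likely miss if the same user asks again within the window or manually overrides the result.

```python
from dataclasses import dataclass
from typing import List, Optional


# Hypothetical log record. Field names are illustrative, not Apple's schema.
@dataclass
class Interaction:
    user: str
    timestamp: float   # seconds since an arbitrary epoch
    status_code: int   # what the backend returned
    overridden: bool   # user manually undid or replaced the result (e.g. skipped the song)


# Assumption: asking again within 30 seconds suggests the first answer missed.
REASK_WINDOW_S = 30.0


def request_success_rate(log: List[Interaction]) -> float:
    """The "Siri's doing great" number: fraction of requests that returned HTTP 200."""
    return sum(i.status_code == 200 for i in log) / len(log)


def likely_intent_failures(log: List[Interaction]) -> List[Interaction]:
    """Flag requests followed by a quick re-ask from the same user, or a manual override."""
    ordered = sorted(log, key=lambda i: (i.user, i.timestamp))
    nexts: List[Optional[Interaction]] = [*ordered[1:], None]
    flagged = []
    for cur, nxt in zip(ordered, nexts):
        reasked = (
            nxt is not None
            and nxt.user == cur.user
            and nxt.timestamp - cur.timestamp <= REASK_WINDOW_S
        )
        if reasked or cur.overridden:
            flagged.append(cur)
    return flagged


# Made-up example: every request "succeeded" as far as the server is concerned.
EXAMPLE_LOG = [
    Interaction("parent", 0.0, 200, False),    # "What's the weather tomorrow?"
    Interaction("parent", 12.0, 200, False),   # "What's the weather at 2pm?" (re-ask)
    Interaction("parent", 600.0, 200, True),   # "Play Toxic" -> wrong song, skipped
    Interaction("kid", 50.0, 200, False),      # a request that apparently went fine
]

if __name__ == "__main__":
    print(f"request success rate: {request_success_rate(EXAMPLE_LOG):.0%}")
    misses = len(likely_intent_failures(EXAMPLE_LOG)) / len(EXAMPLE_LOG)
    print(f"likely intent misses: {misses:.0%}")
```

Same four requests: the dashboard says 100%, the crude proxy says half of them probably missed. The proxy is noisy (people re-ask for plenty of reasons), but its job is to point someone at something worth looking at, not to be the final grade.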

Nobody’s Watching Meeeeeee

Back when Steve Jobs was around, he was often called arrogant and said to have a “reality distortion field”, and he famously eschewed things like focus groups, saying:

It’s really hard to design products by focus groups. A lot of times, people don’t know what they want until you show it to them.

And you know? He’s totally right. You can’t design-by-committee the iPhone, the Mac, Google Search, or any of the other myriad magical things we all use on a daily basis. There’s no metric you can point to that says “The iPhone 1.0 will be a success!”.

Steve had a supernatural read on what people would want once they saw it. “We don’t do focus groups” means: We understand users more deeply than they can articulate themselves. He absolutely made things that people want. Steve also had the exorbitant privilege of being the triumphant returning founder. He could cut through the organization to make it do whatever he wanted. Here’s how that looks in practice:

I am quite disappointed at how Windows Usability has been going backwards and the program management groups don’t drive usability issues.

Let me give you my experience from yesterday.

I decided to download Moviemaker and buy the Digital Plus pack so I went to Microsoft.com. They have a download place so I went there.

The first 5 times I used the site it timed out while trying to bring up the download page. Then after an 8 second delay I got it to come up.

This site is so slow it is unusable.

It wasn’t in the top 5 so I expanded the other 45.

These 45 names are totally confusing. These names make stuff like: C:\Documents and Settings\billg\My Documents\My Pictures seem clear.

They are not filtered by the system I am on and so many of the things are strange.

I tried scoping to Media stuff. Still no moviemaker. I typed in moviemaker. Nothing. I typed in movie maker.

Nothing.

So I gave up and sent mail to Amir saying - where is this Moviemaker download? Does it exist?

So they told me that using the download page to download something was not something they anticipated.

Who at Apple is sending this email for Siri?

Siri has been getting the same “this is embarrassingly bad” feedback since, oh, roughly 2014. Why hasn’t Siri gotten fixed? Where’s the roundtable or apology letter?

Siri’s failures are diffuse. There’s no single giant problem. If Siri just plain old didn’t work (like Apple Maps or Titan), or had major outages (like iWork did), I suspect those would’ve been fixed quickly. Maps was a dramatic, viral failure. A single catastrophic launch moment. Siri has been killing us by a thousand papercuts over more than a decade.

I’m shocked that nobody in Apple leadership could see how big a problem this is. Siri is embarrassingly bad at things it really ought to nail 100% of the time, and the brand damage seems more significant than Apple understands.

Leadership Dysfunction

Apple missed the LLM wave. (Everyone except a small few missed it, so Apple could be excused, but just compare how Google quickly re-oriented with Gemini and went from a distant contender to trading first place with the rest of the top 3 providers, to Apple’s … current… whatever they are doing.) It took far too long to identify that Apple Intelligence was broken. It sounded like a mess.

Even if “everyone” agreed Siri was broken, nobody other than Tim Cook could decree that everyone drop everything and integrate with it. And there’s no evidence he did until it was far too late to do anything about it. The alternative suggests that leadership didn’t know it was an issue. Are they not listening to customers? Are they gathering, and being measured on, the wrong metrics? What allowed Siri’s terrible performance to fester for so long?

How to Fix (Some Of) This

Something needs to replace Steve Jobs’s ability to look at a product and say “This isn’t working”. Tim Cook isn’t that person (and he never claimed to be), but Apple needs more structured product management and data science teams to close the gap between measurement and assessment. Or, if those teams are already there, it needs to restructure things so PMs have more organizational clout.

When I ask for the weather tomorrow, do I mean literally the high temp and the low temp? It feels like product managers at Apple don’t use Apple products very much, because how could they leave this broken for so long? Or they’ve tried and tried, and something out of their control is blocking them from solving this kind of problem. From the outside it’s impossible to know. Could you look at other on-device signals? Do I then ask Siri “What’s the weather at 2pm?” Do you see me opening the Weather app and tapping into tomorrow’s detail? Can you access my sleep timer so you know when I go to bed and when I wake up, to better tailor the response?
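As a sketch of what “tailor the response” could mean, the Python below summarizes tomorrow’s forecast only over the hours you’re actually awake and says which way the temperature is heading. The hourly forecast format, the `wake_hour`/`bed_hour` inputs (standing in for a sleep schedule), and the example temperatures are all assumptions for illustration; this isn’t how Siri or the Weather app work today.

```python
from typing import Dict, Sequence, Union


def waking_hours_summary(
    hourly_temps_f: Sequence[int], wake_hour: int, bed_hour: int
) -> Dict[str, Union[int, str]]:
    """Summarize a 24-hour forecast over waking hours only.

    hourly_temps_f: 24 temperatures, index = hour of day (hypothetical format).
    wake_hour, bed_hour: same-day hours, e.g. read from an on-device sleep schedule.
    """
    if len(hourly_temps_f) != 24:
        raise ValueError("expected 24 hourly temperatures")
    if not 0 <= wake_hour < bed_hour <= 24:
        raise ValueError("expected 0 <= wake_hour < bed_hour <= 24")
    awake = [hourly_temps_f[h] for h in range(wake_hour, bed_hour)]
    start, end = awake[0], awake[-1]
    trend = "falling" if end < start else "rising" if end > start else "steady"
    return {"start": start, "end": end, "low": min(awake), "high": max(awake), "trend": trend}


# Made-up forecast: warm overnight, then a cold front moves through during the day.
TOMORROW = [66] * 8 + [60, 55, 50, 45, 40, 38, 36, 35, 34, 34, 33, 33, 33, 33, 33, 33]

if __name__ == "__main__":
    s = waking_hours_summary(TOMORROW, wake_hour=9, bed_hour=22)
    print(f"24-hour high/low: {max(TOMORROW)}/{min(TOMORROW)}")
    print(f"While you're up: {s['start']} at 9am, {s['trend']} to {s['end']} by 9pm")
```

Same forecast, same data: the 24-hour 66/33 says pack a t-shirt, while the waking-hours version says the 66 happens while you’re asleep and it only gets colder from there.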

When I ask an ambiguous question like “Hey Siri, play Toxic”, should Siri/Apple Music jump to Britney Spears, or can it do an on-device check first? Is it more likely I meant the song I have listened to recently, have in my library, and have starred, or a pop star I hardly listen to? Apple probably already has the data and the algorithms to make this decision better, yet it isn’t using them.

Do more people listen to Britney Spears than BoyWithUke? Undoubtedly. Absent any additional context, Siri made a reasonable, predictable, correct decision. That answer showed up as another +1 in the “Siri’s doing great” dashboard. Apple has ready access to lots of additional context that would tell them (if they were looking) that Siri made the wrong choice here.
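Here’s a minimal Python sketch of that on-device check: score each catalog match by global popularity (roughly the “reasonable” default described above) plus boosts for personal signals like being in my library, starred, or recently played. The `Candidate` fields, the weights, and the play counts are all made up for illustration; a real ranker would presumably learn its weights rather than hard-code them.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Candidate:
    title: str
    artist: str
    global_plays: int                           # catalog-wide popularity
    in_library: bool = False                    # on-device signal
    starred: bool = False                       # on-device signal
    days_since_last_play: Optional[int] = None  # None = this user never played it


# Illustrative weights, not tuned on any real data.
W_POPULARITY = 1.0
W_LIBRARY = 3.0
W_STARRED = 2.0
W_RECENT = 4.0
RECENCY_HALF_LIFE_DAYS = 7.0


def score(c: Candidate) -> float:
    # Log scale, so a 10x more popular track gets +1 rather than 10x the score.
    s = W_POPULARITY * math.log10(1 + c.global_plays)
    if c.in_library:
        s += W_LIBRARY
    if c.starred:
        s += W_STARRED
    if c.days_since_last_play is not None:
        # Recency boost halves every RECENCY_HALF_LIFE_DAYS.
        s += W_RECENT * 0.5 ** (c.days_since_last_play / RECENCY_HALF_LIFE_DAYS)
    return s


def resolve(candidates: List[Candidate]) -> Candidate:
    """Pick the most likely intended track for an ambiguous request."""
    return max(candidates, key=score)


# Made-up counts: only the 10x ratio between them matters here.
britney = Candidate("Toxic", "Britney Spears", global_plays=10**9)
uke_no_context = Candidate("Toxic", "BoyWithUke", global_plays=10**8)
uke_mine = Candidate("Toxic", "BoyWithUke", global_plays=10**8,
                     in_library=True, starred=True, days_since_last_play=0)

if __name__ == "__main__":
    print(resolve([britney, uke_no_context]).artist)  # no personal context
    print(resolve([britney, uke_mine]).artist)        # in my library, starred, played today
```

With no personal context, Britney wins, which matches the “reasonable, predictable, correct” default. Add the signals already sitting on the phone and BoyWithUke wins comfortably, and none of them has to leave the device.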

Someone should’ve asked ~14 years ago: “How do we know if Siri is actually working?” and been given the resources to figure that out. Maybe it’s more on-device data collection. Maybe it’s more explicit opt-in to gathering more data. Maybe it’s more people listening to users, watching message boards, or simply using Siri day-to-day. Maybe it’s allocating resources to mine this feedback for patterns.

But even then, software doesn’t have the same hard data as hardware. Get used to not having all the data you need to make a decision. Listen to your gut more. Apple doesn’t need another Steve Jobs if it has a way to diffuse his abilities down to the leaf nodes of the organization and then surface the learnings back up to the C-suite.

LLMs?!!?

For a piece about AI it’s strange I haven’t discussed LLMs yet. AI is much broader than LLMs, of course, and data science is a big piece of it. But there are applications of LLMs that can help here.

In my examples above, there are signals that Siri successfully responded to my request (probably ticking up some internal metric that makes Siri look successful) but failed to respond to my intent. LLMs are exceptionally good at spotting that kind of mismatch, and it wouldn’t take a gigantic, expensive, trillion-parameter model to greatly improve Siri’s decision-making here.

I get why Apple doesn’t want to simply turn Siri into an LLM-powered chatbot. Eventually it will be (experiments with ChatGPT and now Gemini are clear evidence we’re going there), but I buy that they believe the time isn’t right. So maybe the LLM is just running on the device, gathering small, already extant data points (“User asked Siri for X. They got Y. They then did Z”), and reporting back “I think Siri failed here: they meant a but got b.”

That could go a long way toward providing fuzzy feedback to the product and data teams, giving them a place to start digging and experimenting.
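The suggestion above is a small on-device LLM. As a stand-in, the Python below uses a plain rule-based heuristic, just to show the shape of the data flowing through it: an “asked X, got Y, then did Z” episode in, an optional “they meant a but got b” report out. The `Episode` fields and the corrective-action event names are hypothetical; in the proposal, a model would replace `judge` so it could catch cases no hand-written rule anticipates.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Episode:
    asked: str                         # X: what the user said
    got: str                           # Y: what Siri did
    then_did: str                      # Z: the user's next action (hypothetical event name)
    then_target: Optional[str] = None  # what that next action was about, if known


# Hypothetical event names that suggest the user was correcting Siri.
CORRECTIVE_ACTIONS = {"skipped_and_played", "reasked_siri", "opened_detail_view"}


def judge(ep: Episode) -> Optional[str]:
    """Rule-based stand-in for the on-device model: return a failure report or None."""
    if ep.then_did in CORRECTIVE_ACTIONS and ep.then_target and ep.then_target != ep.got:
        return f'I think Siri failed here: they meant "{ep.then_target}" but got "{ep.got}".'
    return None


def failure_reports(episodes: List[Episode]) -> List[str]:
    """Collect only the episodes that look like misses; everything else stays silent."""
    return [r for r in (judge(ep) for ep in episodes) if r is not None]


EPISODES = [
    Episode("play Toxic", "Toxic (Britney Spears)", "skipped_and_played", "Toxic (BoyWithUke)"),
    Episode("weather tomorrow", "daily high/low", "opened_detail_view", "hourly forecast"),
    Episode("set a timer for 10 minutes", "10-minute timer", "locked_phone"),
]

if __name__ == "__main__":
    for report in failure_reports(EPISODES):
        print(report)
```

Each report is deliberately fuzzy: it’s a lead for someone to go dig into, not a verdict, which is all this kind of feedback loop needs to provide.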

Leaving Hope

Apple kept making the choice to ship a product that was terrible at the thing that mattered: understanding what you meant - in part because nobody was in a position to notice, and/or nobody who noticed was in a position to fix it. I get the organizational challenges and philosophy that got them there, but I really hope this change is an opportunity for them to figure out a pragmatic way forward that gives customers the experience they want while maintaining the brand promise of “privacy and things ‘just work’”. They have all the resources necessary to do this - it just takes the willingness to make it so.

Appendix

Privacy-First Architecture

Apple has long touted their privacy-first stance. One of the reasons why I’m all-in on Apple’s ecosystem is I do actually believe that they take this more seriously than their competitors. I don’t want to be the product being sold, so I deeply appreciate this and put my money where my mouth is.

This privacy-first stance has been claimed as a major reason why Siri is so bad: models won’t have as much training data to learn on. I deeply question this. Commercial models like ChatGPT and Anthropic’s Claude have not had access to that kind of on-device user data and are still wildly successful. (Google does get lots of Android-sourced data, but it’s not obvious to me this has helped Gemini in a substantial, differentiable way.)

I’m not sure how much this contributes to Siri’s problems, if at all, which is why I put it here in the Appendix. The point is not to argue that their privacy-first architecture is the cause, but to speculate that it isn’t.

A company that has figured out how to safely leverage health data can certainly figure out how to securely manage privacy in the age of AI. They have a large audience using health features: in early Apple Health research, over 400,000 people enrolled in the first Apple Heart Study, and over 350,000 people are currently contributing to research studies via the Apple Research app.

Privacy shouldn’t be a blocker to improving Siri or Apple Intelligence. If it is, that feels more like a lack of desire to solve it than a real technical blocker.